Even if you don't have experience with combinators or Spark, we'll cover enough examples of Kafka Streams transformations in this post for you to feel comfortable and gain confidence through hands-on experience. As previously mentioned, stateful transformations depend on maintaining the state of the processing. However, there were still a few concerns to be addressed. Decoupling: we want to perform the computation and cache update in a separate work stream from the one that responds to the update request. Like the repartition topic, the changelog topic is an internal topic created by the Kafka Streams framework itself.

In theory, all looked good, and an existing Kafka Streams application with nearly the same logic working well in production increased our confidence in this solution.

However, we also delete records from the table immediately after inserting them, since we don't want the table to grow and the Debezium connector will see the inserts regardless.

Let's create a message binding interface, assuming that you have a Kafka broker running under localhost:9092.

The intention is to show creating multiple new records for each input record. For example, suppose we receive 4 messages like aaabbb, bbbccc, bbbccc, cccaaa with a cap set to 7.
To perform aggregation based on customerId, our expectation of window-based aggregation was that for each key we would receive the results in the downstream Processor nodes strictly after the expiration of the window. However, a significant deviation with the Session Recordings feature was the size of the payload and the latency requirements.

Use it to produce zero, one, or more records from each input record processed. This is a stateful record-by-record operation, i.e., transform(Object, Object) is invoked individually for each record of a stream and can access and modify state. For org.apache.kafka.streams.processor.Punctuator#punctuate(long) to be called, a schedule must be registered, so that periodic actions can be performed.

Let's define a method initializeStateStores where we will intercept the builder and create our desired state store. Call the initializeStateStores method from our requestListener. We then need to initialize our CustomProcessor in the KStream. See https://kafka.apache.org/21/documentation/streams/developer-guide/dsl-api.html#applying-processors-and-transformers-processor-api-integration.

GetYourGuide is the booking platform for unforgettable travel experiences. So we opted to precompute this payload whenever the underlying data changed, and store the result in a cache so it can be retrieved quickly every time after that.

Do let me know if you have any questions, comments, or ideas for improvement.
Where `flatMap` may produce multiple records from a single input record, `map` is used to produce a single output record from an input record. But let's get started. We also want to test it, right?

READ COMMITTED is the default isolation level in most other databases and is commonly recommended as the default for Spring services anyway.

So, when we had to implement the VWO Session Recordings feature for the new Data platform, Kafka was a logical choice, with the Kafka Streams framework doing all the heavy lifting involved with using the Kafka Consumer API, allowing us to focus on the data processing part.

I uploaded a completely working project, including the list of Gradle dependencies, to my GitHub; the link is posted at the end of this article. It will be beneficial both to people who work with Kafka Streams and to people who are integrating Kafka Streams with their Spring applications.

Set a new key (with possibly new type) for each input record.
As a result, the Kafka Streams framework is forced to perform a repartition operation (similar to the shuffle step in the Map/Reduce paradigm).

Dynamically materialize this stream to topics using the provided Produced instance.

In one of our earlier blog posts, we discussed how the windowing and aggregation features of Kafka Streams allowed us to aggregate events in a time interval and reduce update operations on a database. It can also become a necessity in situations when you have to adhere to quotas and limits.

Kafka Streams transformations have similarities to functional combinators found in languages such as Scala. `valFilter` is set to MN in the Spec class. Marks the stream for data re-partitioning: we are using both `flatMap` from Kafka Streams as well as `flatMap` from Scala.

Create a new KStream that consists of all records of this stream which satisfy the given predicate. Transform each record of the input stream into zero or more records in the output stream (both key and value type can be altered arbitrarily). Join records of this stream with KTable's records using a non-windowed left equi join.

A state store instance is created per partition and can be either persistent or in-memory only. State store replication through changelog topics is useful for streaming use cases where the state has to be persisted, but it was not needed for our aggregation use case as we were not persisting state. Stateful transformations, on the other hand, perform a round trip to the Kafka broker(s) to persist data transformations as they flow.

VWO Session Recordings capture all visitor interaction with a website, and the payload size of the Kafka messages is significantly higher than in our other applications that use Kafka. The way we wanted to batch updates to an external sink for a particular customer's data was to fire an update when either of two conditions was met. The batching strategy we wanted to implement was similar to the functionality that frameworks like Apache Beam provide through the concept of windows and triggers.

Personally, I got to the Processor API when I needed a custom count-based aggregation. In the fragment `WordCountTransformerSupplier(wordCountsStore.name()), wordCountsStore.name());`, the store name is passed both to the supplier and to the enclosing call.

Hinrik Örn Sigurðsson is a senior backend engineer in the Catalog team.
Our service is written in Java, with Spring as the application framework and Hibernate as an ORM. When providing information about activities for display on our website, our frontend teams have a few requirements: the data must be quick to retrieve, ideally with a single request, and some calculation and aggregation should already be applied to the data.

Let's define a CommandLineRunner where we will initialize a simple KafkaProducer and send some messages to our Kafka Streams listener. Then, if you start your application, the console logs should show that, as expected, it aggregated and flushed characters b and c while a:6 is waiting in the state store for more messages.

In Kafka Streams, these are called state stores; they are held locally by the application and, by default, backed up to Kafka changelog topics.

Transform the value of each input record into a new value (with possible new type) of the output record. I like to think of `map` as one-to-one versus the potential for `flatMap` to be one-to-many.
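The one-to-one versus one-to-many distinction between `map` and `flatMap` can be illustrated without a broker. The following is a minimal sketch that uses `java.util.stream` as a stand-in for a KStream; it is an analogy only, not the Kafka Streams API, and the class and method names (`MapVsFlatMap`, `mapUpper`, `flatMapWords`) are hypothetical.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

// Analogy only: java.util.stream stands in for a KStream here.
class MapVsFlatMap {

    // map: every input value yields exactly one output value (one-to-one).
    static List<String> mapUpper(List<String> values) {
        return values.stream()
                .map(String::toUpperCase)
                .collect(Collectors.toList());
    }

    // flatMap: every input value may yield zero, one, or many output values.
    static List<String> flatMapWords(List<String> values) {
        return values.stream()
                .flatMap(value -> Arrays.stream(value.split(" ")))
                .filter(word -> !word.isEmpty()) // an empty input yields no output
                .collect(Collectors.toList());
    }
}
```

With the inputs "a b" and "c", `mapUpper` returns two values while `flatMapWords` returns three, which is the difference the text describes.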

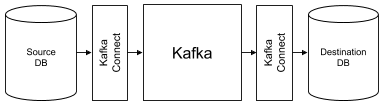


The Transformer interface strikes a nice balance between the ease of using Kafka Streams DSL operators and the capabilities of the low-level Processor API. Within the Transformer, the state is obtained via the ProcessorContext. Kafka Streams is a relatively young project that lacks many features that, for example, already exist in Apache Storm (not directly comparable, but oh well).

Therefore, we can improve the scalability of our solution by only updating any cache entry at most every few minutes, to ease the load on our service and database. One trigger for an update was that the number of events for that customer exceeded a certain threshold.

For example, let's imagine you wish to filter a stream for all keys starting with a particular string in a stream processor. In this case, Kafka Streams doesn't require knowing the previous events in the stream.

We call the transform method on KStream, then we initialize CustomProcessor in there. We simply need to call this function in our transform method right after the loop is done. You are probably wondering why transform returns null. Notice that we will flush only two records, b:9 and c:9, while record a:6 will still be sitting in the state store of our transformer until more messages arrive.
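To see why only b:9 and c:9 are flushed for the messages aaabbb, bbbccc, bbbccc, cccaaa with a cap of 7, here is a minimal plain-Java model of the cap-and-flush logic. It is a sketch under stated assumptions, not the article's CustomProcessor: a `LinkedHashMap` stands in for the key-value state store, no Kafka API is used, flushed entries are assumed to be removed from the store, and the class name `CappedLetterAggregator` is hypothetical.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Plain-Java model of the capped aggregation; the map below is a stand-in
// for the transformer's key-value state store (assumption for illustration).
class CappedLetterAggregator {
    private final int cap;
    private final Map<Character, Integer> store = new LinkedHashMap<>();

    CappedLetterAggregator(int cap) {
        this.cap = cap;
    }

    // Adds the letters of one message to the running counts, then flushes
    // (returns and removes) every entry whose count has reached the cap.
    List<String> process(String message) {
        for (char letter : message.toCharArray()) {
            store.merge(letter, 1, Integer::sum);
        }
        List<String> flushed = new ArrayList<>();
        Iterator<Map.Entry<Character, Integer>> it = store.entrySet().iterator();
        while (it.hasNext()) {
            Map.Entry<Character, Integer> entry = it.next();
            if (entry.getValue() >= cap) {
                flushed.add(entry.getKey() + ":" + entry.getValue());
                it.remove();
            }
        }
        return flushed;
    }

    // Entries still waiting below the cap.
    Map<Character, Integer> pending() {
        return store;
    }
}
```

Feeding the four messages in order flushes b once its count reaches 9 on the third message and c once it reaches 9 on the fourth, while a stays pending at 6.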
In order to assign a state, the state must be created and registered beforehand. We can adjust the record delay and flush interval of the Kafka transformer, increase the number of Kafka consumers, or even have the Kafka consumer push the aggregated messages to a job queue with different scalability strategies (e.g. RabbitMQ with fanout processing). We check whether its key is present in our queue.

`flatMap` performs as expected if you have used it before in Spark or Scala. The following Kafka Streams transformation examples are primarily examples of stateless transformations. A stateless transformation simply performs each filtering operation on the message and moves on, and we tested the expected results for filters on sensor-1 and sensor-2 and a default. Since it is a stateless transformation, it will live on a receiver's instance. I do plan to cover aggregating and windowing in a future post.

We are using in-memory key-value stores for storing aggregation results and have turned off changelog topic-based backup of the state store. Using in-memory key-value stores also meant that the Kafka Streams application left a minimal footprint on the Kafka cluster. We needed something above what the Kafka Streams DSL operators offered.

Define the required properties under application.properties, then enable binding and create a simple stream listener that prints incoming messages. So far, so good!

For our use case we need two state stores. The state store will be created before we initialize our CustomProcessor; all we need is to pass stateStoreName into it during initialization (more about it later). We need to provide stateStoreName to our CustomProcessor, and also to the transform method call.

Group the records by their current key into a KGroupedStream.
Developers turn to the Processor API when the Apache Kafka Streams toolbox doesn't have the right tool for their needs or when they need better control over their data. The Processor API is a low-level Kafka Streams construct. Using it requires manually creating the streams Topology, a process that is abstracted away from users of standard DSL operators like map(), filter(), reduce(), etc. I was deciding how and what goes to internal topic(s), and I had better control over my data overall.

Let's create a custom, stateful transformer that aggregates certain letters and, as soon as it reaches a certain cap, flushes the aggregated values down the stream. Here we simply create a new key-value pair with the same key but an updated value. We returned null from the transform() method because we didn't want to forward the records there. I think we are done here!

Batching write operations to a database can significantly increase the write throughput.

This way, we can retain consistency by writing data in a single transaction on only one data source, with no need to worry about whether our job queue is down at the moment. The event listener then uses Spring's TransactionSynchronization facility to trigger corresponding inserts to the outbox table right before a transaction is committed. With all these changes in place, our system is better decoupled and more resilient, all the while having an up-to-date caching mechanism that scales well and is easily tuned.

Should you have any feedback or doubts regarding this article, you can share them via comments.
Hello, today I'm going to talk about the pretty complex topic of the Apache Kafka Streams Processor API (https://docs.confluent.io/current/streams/developer-guide/processor-api.html). At Wingify, we have used Kafka across teams and projects, solving a vast array of use cases. We'll cover examples of various inputs and outputs below.

A Transformer can access and modify a state that is available beyond a single call of transform(Object, Object). The result of the aggregation step is a KTable object and is persisted and replicated for fault tolerance with a compacted Kafka changelog topic. Also, we expect the updates to be in near real-time.

Now you can start the application and send some messages, and you should see that the messages are being received by our Kafka Streams listener. You probably noticed a weird name here: &stream-builder-requestListener. We should also implement logic for reaching the cap and flushing the changes.

Notice that in the test class we are now passing two records with the value of MN. `count` is a stateful operation which was only used to help test in this case.

All of this happens independently of the request that modified the database, keeping those requests resilient. In our case the value is a string of comma-separated language codes, so our merge function will return a string containing the union of the old and new language codes.
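A merge function that returns the union of the old and new comma-separated language codes could look like the sketch below. The original implementation is not shown in the text, so this is only one plausible shape: the class and method names (`LanguageCodeMerge`, `merge`) are hypothetical, and it assumes both inputs are non-null and that insertion order should be preserved.

```java
import java.util.LinkedHashSet;
import java.util.Set;

// Hypothetical helper: unions two comma-separated lists of language codes.
class LanguageCodeMerge {

    // Assumes non-null inputs; blank entries are ignored, and duplicates
    // are dropped while keeping first-seen order.
    static String merge(String oldCodes, String newCodes) {
        Set<String> union = new LinkedHashSet<>();
        for (String part : (oldCodes + "," + newCodes).split(",")) {
            String code = part.trim();
            if (!code.isEmpty()) {
                union.add(code);
            }
        }
        return String.join(",", union);
    }
}
```

A set-based union makes the merge idempotent, so replaying the same update does not grow the stored value.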
This blog post is an account of the issues we faced while working on the Kafka Streams based solution and how we were able to find a way around them. And I really liked the Processor API!

Recently, the team was tasked with providing up-to-date aggregations of catalog data to be used by the frontend of the GetYourGuide website. His team's mission is to develop the services that store our tours and activities' core data and to further structure and improve the quality of that data. The data for a single activity is sourced from over a dozen database tables, any of which might change from one second to the next, as our suppliers and staff modify and enter new information about our activities. In software, the fastest implementation is one that performs no work at all, but the next best thing is to have the work performed ahead of time.

How can we guarantee this when the database and our job queue can fail independently of each other? To populate the outbox table, we created a Hibernate event listener that notes which relevant entities got modified in the current transaction.

This ensures we only output at most one record for each key in any five-minute period.

A Transformer (provided by the given TransformerSupplier) is applied to each input record. In order to make our CustomProcessor work, we need to pre-create our state store. Surprisingly, the name comes from the name of our method annotated with @StreamListener.

Kafka Streams transformations provide the ability to perform actions on Kafka Streams, such as filtering and updating values in the stream. Conversely, let's say you wish to sum certain values in the stream. In this case, you would need state to know what has already been processed in previous messages in order to keep a running tally of the sum.
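The contrast between a stateless filter and a stateful running sum can be sketched in plain Java. This is an illustration only, with no Kafka API involved: a `HashMap` stands in for the state store, and the names `StatelessVsStateful`, `keepsKey`, and `add` are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Illustration only: contrasts a decision that needs no history with one that does.
class StatelessVsStateful {

    // Stateless: the result depends only on the current record's key.
    static boolean keepsKey(String key, String prefix) {
        return key.startsWith(prefix);
    }

    // Stateful: the running sums must survive between calls, so they are
    // kept in a map that plays the role of a state store.
    private final Map<String, Long> sums = new HashMap<>();

    // Adds the value to the key's running total and returns the new total.
    long add(String key, long value) {
        return sums.merge(key, value, Long::sum);
    }
}
```

The filter can run on any instance at any time, whereas the sum only gives correct totals if every record for a key reaches the same store, which is why stateful operations bring state stores and repartitioning into play.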
The outbox pattern is a good fit for this task. As an aside, we discovered during testing that with enough concurrency, the writes to the outbox table would cause deadlocks in MySQL.

Schedule actions to occur at strictly regular intervals (wall-clock time) and gain full control over when records are forwarded to specific Processor nodes.

Group the records of this KStream on a new key that is selected using the provided KeyValueMapper.

All the source code is available from my Kafka Streams Examples repo on GitHub.

To maintain the current state of processing the inputs and outputs, Kafka Streams introduces a construct called a State Store. Operations such as aggregations (like the previous sum example) and joins of Kafka streams are examples of stateful transformations. Hence, their state is backed up on the Kafka broker through changelog topics rather than living only inside our service. We also need a map holding the value associated with each key (a KeyValueStore).

The `branch` function is used to split a KStream by the supplied predicates into one or more KStream results. This will allow us to test the expected `count` results. If the instance goes down, the transformed data will not get rebalanced among other listening instances from the same group; only the original (pre-transform) data will.

With an empty table, MySQL effectively locks the entire index, so every concurrent transaction has to wait for that lock. We got rid of this kind of locking by lowering the transaction isolation level from MySQL's default of REPEATABLE READ to READ COMMITTED. As a benefit, this also got rid of other occasional locking issues we had encountered in our service.

What we wanted to do for the recordings feature was quite similar. VisitorProcessor implements the init(), transform(), and punctuate() methods of the Transformer and Punctuator interfaces. The transform() method is where we accept a record from the input topic.

Today, we will implement a stateful transformer, so we can use as many of the available features as possible. When we return null in the method, nothing gets flushed. If you start the application, everything should boot up correctly with no errors. If the state store is not connected to the transformer, Kafka Streams fails with an error like: Processor KSTREAM-TRANSFORM- has no access to StateStore counterKeyValueStore as the store is not connected to the processor
