When you sign in with the LOB-A producer account to the AWS RAM console, you should see the EDLA shared database details, as in the following screenshot. He works with many of AWS's largest customers on emerging technology needs, and leads several data and analytics initiatives within AWS, including support for Data Mesh. They can choose what to share, for how long, and how consumers can interact with it.

They're also responsible for maintaining the data and making sure it's accurate and current.

They are data owners and domain experts, and are responsible for data quality and accuracy.

Many Amazon Web Services (AWS) customers require a data storage and analytics solution that offers more agility and flexibility than traditional data management systems. Having a consistent technical foundation ensures services are well integrated, core features are supported, scale and performance are baked in, and costs remain low. This makes it easy to find and discover catalogs across consumers. The AWS Glue table and S3 data are in a centralized location for this architecture, using the Lake Formation cross-account feature. A centralized model is intended to simplify staffing and training by centralizing data and technical expertise in a single place, to reduce technical debt by managing a single data platform, and to reduce operational costs. Nivas Shankar is a Principal Data Architect at Amazon Web Services. You can drive your enterprise data platform management using Lake Formation as the central location of control for data access management by following various design patterns that balance your company's regulatory needs and align with your LOB expectations. A modern data platform enables a community-driven approach for customers across various industries, such as manufacturing, retail, insurance, healthcare, and many more, through a flexible, scalable solution to ingest, store, and analyze customer domain-specific data to generate the valuable insights they need to differentiate themselves. The solution uses AWS CloudFormation to deploy the infrastructure components supporting this data lake. Lake Formation offers the ability to enforce data governance within each data domain and across domains to ensure data is easily discoverable and secure, lineage is tracked, and access can be audited.
There is no consensus on whether a single account or multiple accounts is better in most cases, but because of the regulatory, security, and performance trade-offs, we have seen customers adopt a multi-account strategy in which data producers and data consumers are in different accounts and the data lake is operated from a central, shared account.

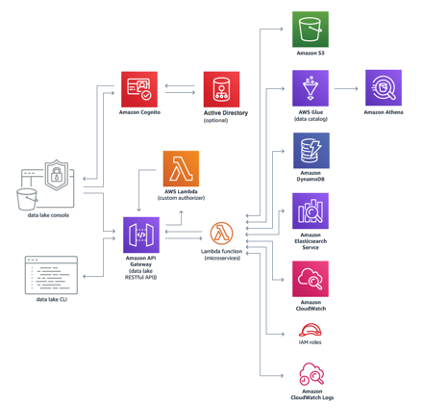

He helps enterprise-level customers build high-performance, highly available, cost-effective, resilient, and secure data lakes and analytics platform solutions, including streaming and batch ingestion into the data lake. You can deploy data lakes on AWS to ingest, process, transform, catalog, and consume analytic insights using the AWS suite of analytics services, including Amazon EMR, AWS Glue, Lake Formation, Amazon Athena, Amazon QuickSight, Amazon Redshift, Amazon Elasticsearch Service (Amazon ES), Amazon Relational Database Service (Amazon RDS), Amazon SageMaker, and Amazon S3. After access is granted, consumers can access the account and perform different actions with the following services: With this design, you can connect multiple data lake houses to a centralized governance account that stores all the metadata from each environment. In this post, we describe an approach to implement a data mesh using AWS native services, including AWS Lake Formation and AWS Glue. This is similar to how microservices turn a set of technical capabilities into a product that can be consumed by other microservices. The solution leverages Amazon API Gateway to provide access to data lake microservices (AWS Lambda functions). Use the provided CLI or API to easily automate data lake activities or integrate this Guidance into existing data automation for dataset ingress, egress, and analysis.

As seen in the following diagram, it separates consumers, producers, and central governance to highlight the key aspects discussed previously. Data domain producers expose datasets to the rest of the organization by registering them with a central catalog. As you look to make business decisions driven by data, you can be agile and productive by adopting a mindset that delivers data products from specialized teams, rather than through a centralized data management platform that provides generalized analytics. Athena acts as a consumer and runs queries on data registered using Lake Formation.
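As a rough illustration of Athena acting as a consumer, the sketch below builds the request for a query against a database registered with Lake Formation. The helper name, the table name `sales`, and the S3 output location are hypothetical. The returned dictionary is meant to be passed as keyword arguments to boto3's Athena `start_query_execution`, which is deliberately not called here.

```python
from typing import Any, Dict


def athena_query_request(database: str, table: str, output_location: str) -> Dict[str, Any]:
    """Build keyword arguments for the Athena StartQueryExecution call."""
    return {
        # Hypothetical sample query; replace with the analysis you need.
        "QueryString": f'SELECT * FROM "{table}" LIMIT 10',
        "QueryExecutionContext": {"Database": database},
        "ResultConfiguration": {"OutputLocation": output_location},
    }


# "sales" and the results bucket are assumed names for illustration only.
request = athena_query_request("edla_lob_a", "sales", "s3://example-athena-results/")

# With credentials configured, this could be submitted as:
#   boto3.client("athena").start_query_execution(**request)
print(request["QueryString"])
```

Lake Formation decides what the query may read, so the same request returns only the tables and columns granted to the calling principal.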

The following screenshot shows the granted permissions in the EDLA for the LOB-A producer account.

You can trigger the table creation process from the LOB-A producer AWS account via Lambda cross-account access. It grants the LOB producer account write, update, and delete permissions on the LOB database via the Lake Formation cross-account share.
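The cross-account grant described above could be expressed roughly as follows. This is a minimal sketch, not the post's actual implementation: the helper name and the account ID are hypothetical, and mapping "write, update, and delete" to the Lake Formation table permissions `INSERT`, `ALTER`, and `DELETE` is an assumption. The dictionary matches the shape of boto3's Lake Formation `grant_permissions` keyword arguments; no AWS call is made.

```python
from typing import Any, Dict


def producer_grant_request(producer_account_id: str, database: str) -> Dict[str, Any]:
    """Build keyword arguments for lakeformation.grant_permissions."""
    return {
        # A 12-digit account ID as the principal makes this a cross-account grant.
        "Principal": {"DataLakePrincipalIdentifier": producer_account_id},
        # TableWildcard targets every table in the database.
        "Resource": {"Table": {"DatabaseName": database, "TableWildcard": {}}},
        # Assumed mapping: write -> INSERT, update -> ALTER, delete -> DELETE.
        "Permissions": ["INSERT", "ALTER", "DELETE"],
    }


# "111122223333" is a placeholder account ID, not a value from this post.
grant = producer_grant_request("111122223333", "edla_lob_a")

# Run from the EDLA with credentials configured:
#   boto3.client("lakeformation").grant_permissions(**grant)
print(grant["Permissions"])
```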

The following are key points when considering a data mesh design: The following are data mesh design goals: The following are user experience considerations: Let's start with a high-level design that builds on top of the data mesh pattern. The same LOB consumer account consumes data from the central EDLA via Lake Formation to perform advanced analytics using services like AWS Glue, Amazon EMR, Redshift Spectrum, Athena, and QuickSight, using the consumer AWS account compute. In the EDLA, complete the following steps: The LOB-A producer account can directly write or update data into tables, and create, update, or delete partitions using the LOB-A producer account compute via the Lake Formation cross-account feature.

A producer domain resides in an AWS account and uses Amazon Simple Storage Service (Amazon S3) buckets to store raw and transformed data. UmaMaheswari Elangovan is a Principal Data Lake Architect at AWS. Through this lifecycle, they own the data model, and determine which datasets are suitable for publication to consumers. The end-to-end ownership model has enabled us to implement faster, with better efficiency, and to quickly scale to meet customers' use cases. The following table summarizes different design patterns. Note that if you deploy a federated stack, you must manually create user and admin groups. In this post, we demonstrate how the Lake House Architecture is ideally suited to help teams build data domains, and how you can use the data mesh approach to bring domains together to enable data sharing and federation across business units.


With the new cross-account feature of Lake Formation, you can grant access to other AWS accounts to write and share data to or from the data lake to other LOB producers and consumers with fine-grained access. We use the following terms throughout this post when discussing data lake design patterns: In a centralized data lake design pattern, the EDLA is a central place to store all the data in S3 buckets along with a central (enterprise) Data Catalog and Lake Formation. At AWS, we have been talking about the data-driven organization model for years, which consists of data producers and consumers. Each data domain, whether a producer, consumer, or both, is responsible for its own technology stack. If both accounts are part of the same AWS organization and the organization admin has enabled automatic acceptance on the Settings page of the AWS Organizations console, then this step is unnecessary. Users in the consumer account, like data analysts and data scientists, can query data using their chosen tool such as Athena and Amazon Redshift. Because a resource link is a pointer, any changes are instantly reflected in all accounts: they all point to the same resource.

These microservices interact with Amazon S3, AWS Glue, Amazon Athena, Amazon DynamoDB, and Amazon OpenSearch Service (successor to Amazon Elasticsearch Service), among other services. Now, grant full access to the AWS Glue role in the LOB-A consumer account for this newly created shared database link from the EDLA so the consumer account AWS Glue job can perform SELECT data queries from those tables. You can create and share the rest of the required tables for this LOB using the Lake Formation cross-account feature. This reduces overall friction for information flow in the organization, where the producer is responsible for the datasets they produce and is accountable to the consumer based on the advertised SLAs.

A grant on the resource link allows a user to describe (or see) the resource link, which allows them to point engines such as Athena at it for queries. Accept this resource share request so you can create a resource link in the LOB-A consumer account. She helps enterprise and startup customers adopt AWS data lake and analytic services, and increases awareness on building a data-driven community through scalable, distributed, and reliable data lake infrastructure to serve a wide range of data users, including but not limited to data scientists, data analysts, and business analysts.
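Accepting the resource share and creating the resource link can be sketched as two small helpers. The function names, the link name, the account ID, and the invitation ARNs below are hypothetical. The dictionary shapes follow boto3's AWS RAM `get_resource_share_invitations` response and AWS Glue `create_database` input, and no AWS call is made in the sketch itself.

```python
from typing import Any, Dict, List


def pending_invitation_arns(invitations: List[Dict[str, Any]]) -> List[str]:
    """Return the ARNs of RAM share invitations that still await acceptance."""
    return [
        inv["resourceShareInvitationArn"]
        for inv in invitations
        if inv.get("status") == "PENDING"
    ]


def resource_link_input(link_name: str, owner_account_id: str, shared_database: str) -> Dict[str, Any]:
    """Build a Glue DatabaseInput that points at a database in another account."""
    return {
        "Name": link_name,
        "TargetDatabase": {
            "CatalogId": owner_account_id,  # account that owns the shared database
            "DatabaseName": shared_database,
        },
    }


# Sample data shaped like ram.get_resource_share_invitations()["resourceShareInvitations"].
sample = [
    {"resourceShareInvitationArn": "arn:aws:ram:us-east-1:111122223333:resource-share-invitation/example-1", "status": "PENDING"},
    {"resourceShareInvitationArn": "arn:aws:ram:us-east-1:111122223333:resource-share-invitation/example-2", "status": "ACCEPTED"},
]
to_accept = pending_invitation_arns(sample)
link = resource_link_input("edla_lob_a_link", "111122223333", "edla_lob_a")

# In the consumer account, with credentials configured:
#   for arn in to_accept:
#       boto3.client("ram").accept_resource_share_invitation(resourceShareInvitationArn=arn)
#   boto3.client("glue").create_database(DatabaseInput=link)
print(to_accept, link["Name"])
```

The resource link holds only the pointer in `TargetDatabase`. Data-level permissions are still granted on the target database and its tables.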

These steps include collecting, cleansing, moving, and cataloging data, and securely making that data available for analytics and ML. Because your LOB-A producer created an AWS Glue table and wrote data into the Amazon S3 location of your EDLA, the EDLA admin can access this data and share the LOB-A database and tables to the LOB-A consumer account for further analysis, aggregation, ML, dashboards, and end-user access. Access the console to easily manage data lake users, data lake policies, add or remove data packages, search data packages, and create manifests of datasets for additional analysis. A grant on the target grants permissions to local users on the original resource, which allows them to interact with the metadata of the table and the data behind it.

That's why this architecture pattern (see the following diagram) is called a centralized data lake design pattern. However, managing data through a central data platform can create scaling, ownership, and accountability challenges, because central teams may not understand the specific needs of a data domain, whether due to data types and storage, security, data catalog requirements, or specific technologies needed for data processing. For instance, one team may own the ingestion technologies used to collect data from numerous data sources managed by other teams and LOBs. When a dataset is presented as a product, producers create Lake Formation Data Catalog entities (database, table, columns, attributes) within the central governance account. A Lake House approach and the data lake architecture provide technical guidance and solutions for building a modern data platform on AWS. The following diagram illustrates the Lake House architecture. Lake Formation permissions are enforced at the table and column level (row level in preview) across the full portfolio of AWS analytics and ML services, including Athena and Amazon Redshift.

You can deploy a common data access and governance framework across your platform stack, which aligns perfectly with our own Lake House Architecture. For information on Okta, refer to Appendix B. Based on a consumer access request, and the need to make data visible in the consumer's AWS Glue Data Catalog, the central account owner grants Lake Formation permissions to a consumer account based on direct entity sharing, or based on tag-based access controls, which can be used to administer access via controls like data classification, cost center, or environment. Lake Formation provides its own permissions model that augments the IAM permissions model. Similarly, the consumer domain includes its own set of tools to perform analytics and ML in a separate AWS account.
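A tag-based grant of the kind mentioned above might look like the following sketch. The helper name, the account ID, and the tag keys and values are hypothetical. The structure follows the `LFTagPolicy` resource accepted by boto3's Lake Formation `grant_permissions`, and the choice of `SELECT` and `DESCRIBE` is an assumption about what a read-only consumer needs.

```python
from typing import Any, Dict, List


def lf_tag_grant_request(consumer_account_id: str, tags: Dict[str, List[str]]) -> Dict[str, Any]:
    """Build grant_permissions arguments that match tables by LF-tag expression."""
    # Sort the keys so the generated request is deterministic.
    expression = [
        {"TagKey": key, "TagValues": values}
        for key, values in sorted(tags.items())
    ]
    return {
        "Principal": {"DataLakePrincipalIdentifier": consumer_account_id},
        "Resource": {"LFTagPolicy": {"ResourceType": "TABLE", "Expression": expression}},
        # Assumed read-only access for the consumer account.
        "Permissions": ["SELECT", "DESCRIBE"],
    }


# Tag keys and values here are illustrative placeholders.
tag_grant = lf_tag_grant_request(
    "444455556666",
    {"environment": ["prod"], "data_classification": ["public", "internal"]},
)

# Run from the central governance account with credentials configured:
#   boto3.client("lakeformation").grant_permissions(**tag_grant)
print(tag_grant["Resource"]["LFTagPolicy"]["Expression"])
```

With this style of grant, newly registered tables that carry matching tags become visible to the consumer without a separate per-table grant.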

This can help your organization build highly scalable, high-performance, and secure data lakes with easy maintenance of its related LOBs' data in a single AWS account with all access logs and grant details. It provides a simple-to-use interface that organizations can use to quickly onboard data domains without needing to test, approve, and juggle vendor roadmaps to ensure all required features and integrations are available.

Lake Formation also provides uniform access control for enterprise-wide data sharing through resource shares with centralized governance and auditing. Lake Formation verifies that the workgroup. During initial configuration, the solution also creates a default

The solution creates a data lake console and deploys it into an Amazon S3 bucket configured for static

microservices provide the business logic to create data packages, upload data, search for


However, it may not be the right pattern for every customer.


It maintains its own ETL stack using AWS Glue to process and prepare the data before being cataloged into a Lake Formation Data Catalog in their own account. In the EDLA, you can share the LOB-A AWS Glue database and tables (edla_lob_a, which contains tables created from the LOB-A producer account) to the LOB-A consumer account (in this case, the entire database is shared). To validate a share, sign in to the AWS RAM console as the EDLA and verify the resources are shared. Create an AWS Glue job using this role to read tables from the consumer database that is shared from the EDLA and for which S3 data is also stored in the EDLA as a central data lake store.

Data changes made within the producer account are automatically propagated into the central governance copy of the catalog. Data Lake on AWS automatically configures the core AWS services necessary to easily tag, search, share, transform, analyze, and govern specific subsets of data across a company or with other external users. The Guidance deploys a console that users can access to search and browse available datasets for their business needs. Data isn't copied to the central account, and ownership remains with the producer.

Data Lake on AWS provides an intuitive, web-based console UI hosted on Amazon S3 and delivered by Amazon CloudFront. Data mesh is a pattern for defining how organizations can organize around data domains with a focus on delivering data as a product. As an option, you can allow users to sign in through a SAML identity provider (IdP) such as Microsoft Active Directory Federation Services (AD FS). This data-as-a-product paradigm is similar to Amazon's operating model of building services. Data-level permissions are granted on the target itself. The first time you create a share, you see three resources: You only need one share per resource, so multiple database shares only require a single Data Catalog share, and multiple table shares within the same database only require a single database share. Therefore, they're best able to implement and operate a technical solution to ingest, process, and produce the product inventory dataset. The strength of this approach is that it integrates all the metadata and stores it in one meta model schema that can be easily accessed through AWS services for various consumers. The central data governance account is used to share datasets securely between producers and consumers. Expanding on the preceding diagram, we provide additional details to show how AWS native services support producers, consumers, and governance. website hosting, and configures an Amazon CloudFront distribution to be used as the solution's console entrypoint. The AWS Cloud provides many of the building blocks required to help customers implement a secure, flexible, and cost-effective data lake. If a discrepancy occurs, they're the only group who knows how to fix it. Each service we build stands on the shoulders of other services that provide the building blocks.

She also enjoys mentoring young girls and youth in technology by volunteering through nonprofit organizations such as High Tech Kids, Girls Who Code, and many more. He helps and works closely with enterprise customers building data lakes and analytical applications on the AWS platform. Data teams own their information lifecycle, from the application that creates the original data, through to the analytics systems that extract and create business reports and predictions. Lake Formation serves as the central point of enforcement for entitlements, consumption, and governing user access.

2022 bibleapppourlesenfants.com All rights reserved.


reference implementation. He works with customers around the globe to translate business and technical requirements into products that enable customers to improve how they manage, secure and access data. This completes the process of granting the LOB-A consumer account remote access to data for further analysis. This approach can enable better autonomy and a faster pace of innovation, while building on top of a proven and well-understood architecture and technology stack, and ensuring high standards for data security and governance.
